Anthropic has confirmed that it accidentally exposed part of the internal source code of its AI-powered coding assistant, Claude Code, during a recent software release.

The company acknowledged that the leak occurred due to a release packaging issue caused by human error, not a cyberattack.

A spokesperson said no customer data or credentials were exposed in the incident, stressing that it was not a security breach.

The issue surfaced when a version of Claude Code was briefly published with internal files included.

How code was exposed

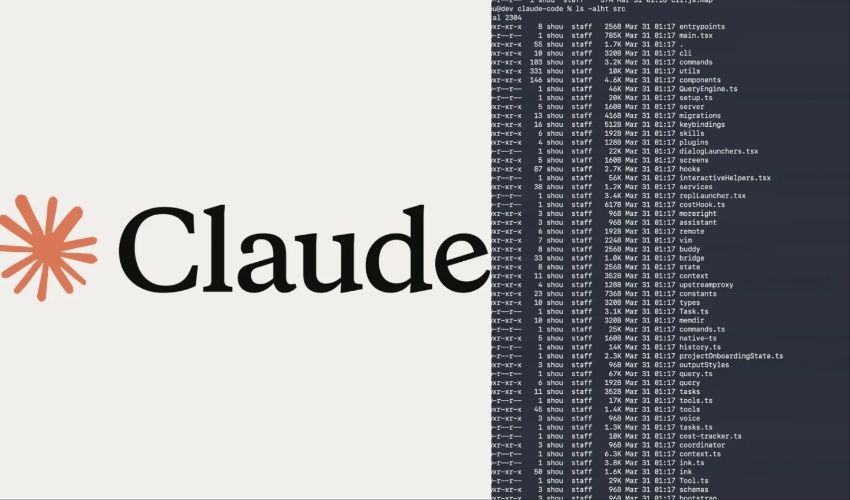

The leak reportedly occurred when version 2.1.88 of Claude Code was uploaded with a large .map debugging file.

This file contained embedded source data, allowing developers to reconstruct the full codebase.

As a result, nearly 1,900 files and around 500,000 lines of code became accessible online.
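
To illustrate how a debugging file can carry source code, here is a minimal sketch. The article does not describe the leaked file's internal layout, so this assumes the common Source Map v3 JSON format, in which an optional `sourcesContent` array holds the full text of each original file listed in `sources`. The function name `extract_embedded_sources` and the sample map are hypothetical and are not taken from the leaked material.

```python
import json


def extract_embedded_sources(source_map_text: str) -> dict[str, str]:
    """Return {original path: source text} for each file embedded in the map.

    Assumes a Source Map v3 JSON document. "sourcesContent" is optional,
    and individual entries may be null when a file's text was not embedded.
    """
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    return {
        path: text
        for path, text in zip(paths, contents)
        if text is not None
    }


# Hypothetical, tiny source map used only for illustration.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const run = () => 1;\n", None],
    "names": [],
    "mappings": "",
})

recovered = extract_embedded_sources(example_map)
for path, text in recovered.items():
    print(path, len(text.splitlines()), "line(s)")
```

In this sketch only `src/main.ts` is recovered, because the second entry has no embedded text. A map that embeds every file would yield the whole source tree in the same way, which is why shipping such a file alongside a release can expose the code it was built from.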

The exposed code was first identified by developer Chaofan Shou and quickly spread across platforms like GitHub.

A related post on X gained massive traction, reaching millions of views within hours.

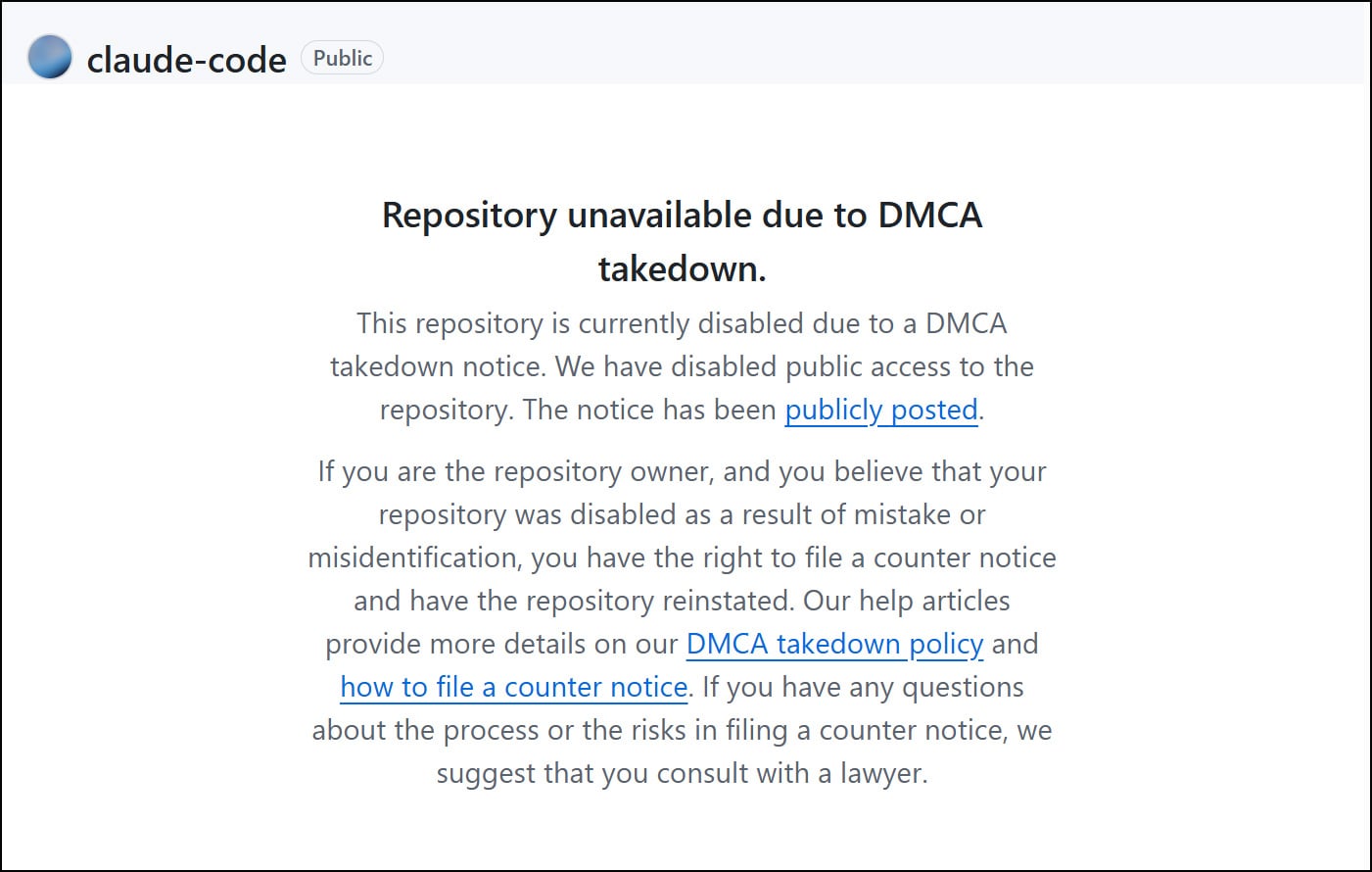

Anthropic has since begun issuing DMCA takedown notices to limit further distribution.

Rivals gain insight into Claude Code

The leak could provide competitors with a deeper understanding of how Claude Code operates.

Although the exposed files do not include core AI model architecture, they offer insights into features and system design.

Developers have already started analyzing the code to uncover undocumented capabilities.

Early analysis of the leaked code suggests that Anthropic has been testing new features.

These include a “Proactive mode”, where the AI could continuously write code, and a “Dream mode”, allowing it to process ideas in the background.

These features were not officially announced prior to the leak.

Timing amid rapid growth

The incident comes during a period of growth for Anthropic, especially following its split from the Pentagon earlier this year.

After the dispute, the company’s chatbot gained popularity, briefly topping app store rankings in the United States.

Separately, Anthropic confirmed it is investigating a bug affecting usage limits in Claude Code.

Users reported hitting limits far quicker than expected, an issue acknowledged by company representative Lydia Hallie.

The company said the problem remains a top priority and is still unresolved.
